Document Status: [Draft|Proposal|Accepted]

Background

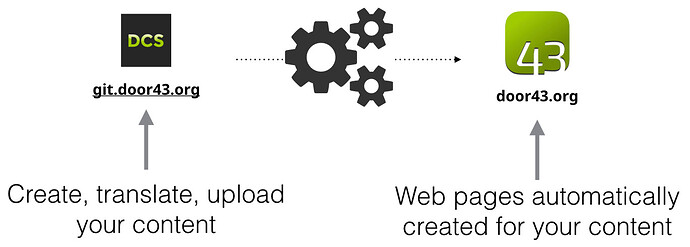

This document describes the revised architecture for the door43.org website, especially the back-end components often referred to as translationConverter (tX). The big picture, as shown in the image above, is still valid.

For example, a project on the Door43 Content Service (DCS) such as unfoldingWord/en_obs ("unfoldingWord® Open Bible Stories - en_obs") would be converted to a page on Door43 ("English: unfoldingWord® Open Bible Stories - Open Bible Stories").

Components

There are two top-level components of the conversion system: Door43 Jobs and tX Jobs.

The system illustrated above is deployed via four discrete Docker containers (each of which can be deployed multiple times). The rq library isolates the components and allows multiple job-handler processes to pick up and run jobs.
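The decoupling that rq provides can be illustrated with a minimal sketch. Here Python's standard-library `queue.Queue` stands in for a Redis-backed rq queue and threads stand in for separate job-handler containers; this is not rq's API, only the shape of the pattern: the enqueue side pushes work without knowing which handler will take it, and any number of handlers drain the same queue.

```python
import queue
import threading
from typing import List


def run_handlers(jobs: List[str], handler_count: int = 3) -> List[str]:
    """Enqueue jobs, then let several handlers drain the shared queue.

    queue.Queue is a stand-in for a Redis-backed rq queue; each thread
    plays the role of one job-handler container.
    """
    job_queue: "queue.Queue[str]" = queue.Queue()
    results: List[str] = []
    results_lock = threading.Lock()

    # The enqueue side only pushes work; it never talks to a handler directly.
    for job in jobs:
        job_queue.put(job)

    def handler(name: str) -> None:
        while True:
            try:
                job = job_queue.get_nowait()
            except queue.Empty:
                return  # nothing left; a real rq worker would keep polling
            with results_lock:
                results.append(f"{name}:{job}")
            job_queue.task_done()

    threads = [threading.Thread(target=handler, args=(f"handler-{i}",))
               for i in range(handler_count)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Each job is taken exactly once, by whichever handler reaches it first, so adding handler containers adds throughput without any change on the enqueue side.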

If we add in the external components and simplify the detail, the end to end system looks like this:

This document will primarily describe Door43 Jobs and the tX Jobs components.

A simplified sequence diagram (omitting tX Jobs internals) looks like this:

DCS

Code: https://github.com/unfoldingWord/dcs

Service: https://git.door43.org/

Status: Stable

The first component is the Door43 Content Service (DCS), which is the location of our content to be converted. A list of example source content repositories is on the "tX Conversion Types" page of the unfoldingWord/door43.org wiki on GitHub.

A webhook configuration on the DCS repositories notifies the door43-enqueue service when a change is made to the repository. This notification is in the form of a JSON payload sent over HTTPS.
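As a rough illustration of what door43-enqueue receives, the sketch below pulls a few details out of a push-event body. The field names (`ref`, `after`, `repository`, `pusher`) are assumed from Gitea's push-event payload rather than taken from this document, and the returned keys, including a `zip_url` built from the archive URL pattern in the old tX API example further down, are hypothetical.

```python
import json
from typing import Any, Dict


def extract_push_details(raw_body: bytes) -> Dict[str, Any]:
    """Pull the fields a conversion job needs out of a push-webhook body.

    Field names follow Gitea's push-event payload (an assumption). The
    "zip_url" pattern is modelled on the old tX API example archive URL.
    """
    payload = json.loads(raw_body)
    repo = payload["repository"]
    ref = payload.get("ref", "")

    # "refs/heads/feature/x" -> ("branch", "feature/x"); tags work the same way.
    ref_type, ref_name = "unknown", ref
    for prefix, kind in (("refs/heads/", "branch"), ("refs/tags/", "tag")):
        if ref.startswith(prefix):
            ref_type, ref_name = kind, ref[len(prefix):]
            break

    commit = payload.get("after", "")
    return {
        "owner": repo["owner"]["username"],
        "repo_name": repo["name"],
        "ref_type": ref_type,
        "ref_name": ref_name,
        "commit": commit,
        "pusher": payload.get("pusher", {}).get("username"),
        # Hypothetical: fetch a snapshot of exactly the pushed commit.
        "zip_url": f"{repo['html_url']}/archive/{commit}.zip",
    }
```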

Door43 Jobs

The Door43 Jobs component handles interaction with DCS and the customization of content needed for the Door43 website(s). The container architecture for Door43 Jobs looks like this:

Note: There is both a production version and a development version (dev-) of the above.

Door43 Enqueue

Code: https://github.com/unfoldingWord/door43-enqueue-job (a webhook receiver that queues hooks for processing by a separate worker)

Service: https://api.door43.org/client/webhook to start a conversion job

Service callback: a separate callback endpoint on this service, used to complete the conversion job

Status: Stable

Stack: Docker, Nginx, Gunicorn, Python 3, Flask API, rq

The door43-enqueue process accepts a JSON payload via a POST request and performs a set of checks on the request before adding it to the Redis queue via the rq package.
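The checks themselves are not spelled out here. A minimal sketch, assuming the enqueue side accepts only Gitea push events with a JSON-object body and routes them to the production or dev- queue named in the Redis section below; the `X-Gitea-Event` header handling and the `REQUIRED_KEYS` list are assumptions, not the service's actual rules.

```python
import json
from typing import Any, Dict, Tuple

REQUIRED_KEYS = ("repository", "ref", "commits")  # hypothetical minimum


def vet_webhook(headers: Dict[str, str], body: bytes,
                dev: bool = False) -> Tuple[str, Dict[str, Any]]:
    """Return (queue_name, payload) for an acceptable webhook, else raise ValueError."""
    # HTTP header names are case-insensitive, so normalise them first.
    lowered = {k.lower(): v for k, v in headers.items()}
    # Assumption: a missing event header is treated as a push.
    if lowered.get("x-gitea-event", "push") != "push":
        raise ValueError("only push events are converted")
    try:
        payload = json.loads(body)
    except json.JSONDecodeError as exc:
        raise ValueError(f"body is not JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("payload must be a JSON object")
    missing = [k for k in REQUIRED_KEYS if k not in payload]
    if missing:
        raise ValueError(f"missing keys: {', '.join(missing)}")
    if not payload["commits"]:
        raise ValueError("push contains no commits")
    # Queue names as listed in the Redis section; dev uses the dev- prefix.
    queue_name = ("dev-" if dev else "") + "Door43_webhook"
    return queue_name, payload
```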

The Docker container is automatically built by Travis CI upon successful completion of the tests – see Docker Deployment Model below. The service is configured to respond to POST requests on /. The master branch listens on port 8000 and the develop branch on port 8001. Nginx is set up as a reverse proxy to map the desired service URL (above) to those ports.

Redis

Code: Hosted service

Service: gogs-sessions.zwea7b.0001.usw2.cache.amazonaws.com:6379

Status: Stable

This is simply the database back end for the queue service. No setup is needed outside of the rq package.

The queues used in this component are:

- Door43_webhook

- dev-Door43_webhook

- Door43_webhook_callback

- dev-Door43_webhook_callback

- failed

Door43 Job Handler

Code: https://github.com/unfoldingWord/door43-job-handler

Service: Not public, only listens to rq queue(s)

Status: Stable

Stack: Docker, Python 3, rq

The door43-job-handler manages the whole Door43 conversion job. It breaks down into these primary steps:

- Preprocess the data from DCS if needed

- Interact with tX to initiate a conversion job and receive the results

- Post-process the output files if needed (e.g. styling HTML)

- Upload the resulting files to the /u/ directory of the door43.org S3 bucket

Note that this component is an HTTP “client” to the tX conversion service.

tX Jobs

tX is a dedicated conversion service with as little Door43 custom code as possible. The intent is that any service can request a job and get mostly vanilla output which it could then customize if desired (e.g. styling HTML output in a specific manner).

The container architecture for tX looks like this:

The above architecture supports launching multiple Job Handler containers that all subscribe to the job queue, allowing the long-running conversion services to scale.

Note: There is both a production version and a development version (dev-) of the above.

tX Enqueue

Code: https://github.com/unfoldingWord/tx-enqueue-job

Service:

Status: Stable

Stack: Docker, Python 3, Flask API or API Star (https://github.com/encode/apistar)

The enqueue component for tX handles all incoming and outgoing HTTP traffic. This component validates requests and queues them for conversion.
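A minimal sketch of that validation and queueing step, using the request fields, the three queue names, and the "queued" reply described in the Sample Flow below. It assumes that OBS PDF requests are recognised by a resource_type of `Open_Bible_Stories` and that the output URL follows the `cdn.door43.org/tx/jobs/<job_id>.zip` pattern from the old API example; both are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, Optional

INPUT_FORMATS = {"md", "tsv", "txt", "usfm"}
OUTPUT_FORMATS = {"html", "pdf"}
REQUIRED = ("job_id", "resource_type", "input_format", "output_format", "source")
OBS_SUBJECT = "Open_Bible_Stories"  # assumption: subject name with underscores


def enqueue_tx_request(req: Dict[str, Any], from_door43: bool, dev: bool = False,
                       now: Optional[datetime] = None) -> Dict[str, Any]:
    """Validate a tX request; return the target queue and the 'queued' reply.

    Raises ValueError for an invalid request. The output URL pattern is
    hypothetical (modelled on the old API example).
    """
    missing = [f for f in REQUIRED if not req.get(f)]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    if req["input_format"] not in INPUT_FORMATS:
        raise ValueError(f"bad input_format: {req['input_format']}")
    if req["output_format"] not in OUTPUT_FORMATS:
        raise ValueError(f"bad output_format: {req['output_format']}")
    if not from_door43 and not req.get("user_token"):
        raise ValueError("user_token is required outside door43.org")
    if req["output_format"] == "pdf" and not req.get("identifier"):
        raise ValueError("PDF requests need an identifier")

    # Route HTML jobs and the two kinds of PDF job to their own queues.
    if req["output_format"] == "html":
        queue = "tX_webhook"
    elif req["resource_type"] == OBS_SUBJECT:
        queue = "tX_OBS_PDF_webhook"
    else:
        queue = "tX_other_PDF_webhook"
    if dev:
        queue = "dev-" + queue

    now = now or datetime.now(timezone.utc)
    reply = {
        "status": "queued",
        "output": f"https://cdn.door43.org/tx/jobs/{req['job_id']}.zip",
        "expires_at": (now + timedelta(days=1)).isoformat(),   # link lives 1 day
        "eta": (now + timedelta(minutes=5)).isoformat(),       # estimate: 5 min
        "tx_retry_count": 0,
    }
    return {"queue": queue, "reply": reply}
```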

This service monitors the failed jobs queue and retries jobs or reports failures as needed.
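The retry policy is not specified. A minimal sketch, assuming the `tx_retry_count` field that tX-Enqueue returns (see the Sample Flow below) is the counter being bumped, and using a hypothetical `MAX_RETRIES` limit:

```python
from typing import Any, Dict, Tuple

MAX_RETRIES = 2  # hypothetical limit; the document does not give one


def handle_failed_job(job: Dict[str, Any]) -> Tuple[str, Dict[str, Any]]:
    """Decide what to do with a job found on the failed queue.

    Returns ("retry", updated_job) to requeue it with tx_retry_count bumped,
    or ("report", job) once the retry budget is spent.
    """
    count = int(job.get("tx_retry_count", 0))
    if count < MAX_RETRIES:
        # Copy rather than mutate, so the failed-queue record stays intact.
        retried = dict(job, tx_retry_count=count + 1)
        return "retry", retried
    return "report", job
```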

Another function of the tX Enqueue service is to monitor the job metadata to notify clients via a callback when jobs are completed. In addition, it updates the job metadata in the S3 bucket where the job information is stored.
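The callback body that tX sends back is described field by field in the Sample Flow below. This sketch assembles it from two hypothetical result records (`lint` and `convert`); sending booleans rather than the strings 'true'/'false', and deriving `success` from the converter result, are assumptions.

```python
from typing import Any, Dict


def build_callback_payload(request: Dict[str, Any], lint: Dict[str, Any],
                           convert: Dict[str, Any]) -> Dict[str, Any]:
    """Assemble the completion callback for an HTML job.

    `lint` and `convert` are hypothetical result records, e.g.
    {"module": "usfm2html", "success": True, "warnings": []}.
    """
    # Echo the original submission, minus the callback URL itself.
    payload = {k: v for k, v in request.items() if k != "callback"}
    payload.update({
        "lint_module": lint["module"],
        "linter_success": lint["success"],
        "linter_warnings": list(lint.get("warnings", [])),
        "convert_module": convert["module"],
        "converter_success": convert["success"],
        "converter_info": list(convert.get("info", [])),
        "converter_warnings": list(convert.get("warnings", [])),
        "converter_errors": list(convert.get("errors", [])),
        "status": "finished",
        # Assumption: overall success mirrors the converter, as in the old
        # API where true meant "converted, even if there were warnings".
        "success": convert["success"],
        "message": "tX job completed",
    })
    return payload
```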

Redis

Code: Hosted service

Service: gogs-sessions.zwea7b.0001.usw2.cache.amazonaws.com:6379

Status: Stable

This is simply the database back end for the queue service. No setup is needed outside of the rq package.

The queues used in this component are:

- For starting jobs:

  - High priority queue

  - Medium priority queue (default)

  - Low priority queue

- A failed job queue
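rq workers started with several queue names check those queues in the order given, which is one way to get the priority behaviour listed above. The sketch below mimics that ordering with plain deques; the queue names `high`, `medium` and `low` are hypothetical.

```python
from collections import deque
from typing import Deque, Dict, Optional

PRIORITIES = ("high", "medium", "low")  # hypothetical queue names, in order


def submit(queues: Dict[str, Deque[str]], job: str, priority: str = "medium") -> None:
    """Add a job to the queue for its priority (medium is the default)."""
    if priority not in PRIORITIES:
        raise ValueError(f"unknown priority: {priority}")
    queues.setdefault(priority, deque()).append(job)


def next_job(queues: Dict[str, Deque[str]]) -> Optional[str]:
    """Take the next job from the highest-priority non-empty queue."""
    for name in PRIORITIES:
        pending = queues.get(name)
        if pending:
            return pending.popleft()
    return None
```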

tX (HTML) Job Handler

Code: https://github.com/unfoldingWord/tx-job-handler

Service: Not public, only listens to rq queue(s)

Status: Stable

Stack: Docker, Python 3, rq, linter and converter libraries

The tX Job Handler service contains all the conversion and linter libraries necessary for executing an HTML job. This service listens to the appropriate rq queue and runs jobs that the enqueue service adds.
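The Sample Flow below notes that Markdown linting still goes through an AWS Lambda call, except for files over the 6 MB Lambda payload limit. A minimal sketch of that routing decision, assuming the files are sent to Lambda as one JSON object and that a hypothetical `margin` covers the rest of the invocation envelope:

```python
import json
from typing import Dict

# AWS Lambda's synchronous payload quota is 6 MB; bytes value approximated.
LAMBDA_PAYLOAD_LIMIT = 6 * 1024 * 1024


def choose_markdown_linter(files: Dict[str, str], margin: int = 256 * 1024) -> str:
    """Return "lambda" if the Markdown fits in one invocation, else "local"."""
    # Approximate the request size by the JSON body the call would send.
    size = len(json.dumps(files).encode("utf-8"))
    return "lambda" if size + margin <= LAMBDA_PAYLOAD_LIMIT else "local"
```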

Conversion modules include:

- md2html - Converts Markdown to HTML (obs, ta, tn, tw, tq, misc)

- md2pdf - Converts Markdown to PDF (obs, ta, tn, tw, tq)

- md2docx - Converts Markdown to DOCX (obs, ta, tn, tw, tq)

- md2epub - Converts Markdown to ePub (obs, ta, tn, tw, tq)

- usfm2html - Converts USFM to HTML (Bible)

- usfm2pdf - Converts USFM to PDF (Bible)

- usfm2docx - Converts USFM to DOCX (Bible)

- usfm2epub - Converts USFM to ePub (Bible)
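The modules above can be read as a lookup table keyed by input and output format. This sketch encodes the list as given; the short resource codes (`obs`, `ta`, `tn` and so on, plus a hypothetical `bible` code for the Bible entries) stand in for the resource_type subjects that the real handler uses, and that mapping is assumed.

```python
from typing import Dict, FrozenSet, Tuple

MD_RESOURCES = frozenset({"obs", "ta", "tn", "tw", "tq"})
BIBLE = frozenset({"bible"})  # hypothetical code for the "(Bible)" entries

# (input_format, output_format) -> (converter module, resource codes handled)
CONVERTERS: Dict[Tuple[str, str], Tuple[str, FrozenSet[str]]] = {
    ("md", "html"): ("md2html", MD_RESOURCES | {"misc"}),
    ("md", "pdf"): ("md2pdf", MD_RESOURCES),
    ("md", "docx"): ("md2docx", MD_RESOURCES),
    ("md", "epub"): ("md2epub", MD_RESOURCES),
    ("usfm", "html"): ("usfm2html", BIBLE),
    ("usfm", "pdf"): ("usfm2pdf", BIBLE),
    ("usfm", "docx"): ("usfm2docx", BIBLE),
    ("usfm", "epub"): ("usfm2epub", BIBLE),
}


def pick_converter(resource: str, input_format: str, output_format: str) -> str:
    """Return the converter module name, or raise LookupError if none applies."""
    entry = CONVERTERS.get((input_format, output_format))
    if entry is None or resource not in entry[1]:
        raise LookupError(f"no converter for {resource}: {input_format} -> {output_format}")
    return entry[0]
```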

tX OBS PDF Job Handler

Code: https://github.com/unfoldingWord/obs-pdf

Service: Not public, only listens to rq queue(s)

Status: Stable (but not easily maintainable)

Stack: Docker, Python 3, rq, ConTeXt

The tX Job Handler service contains all the conversion libraries necessary for building and uploading an OBS PDF file. This service listens to the appropriate rq queue and runs jobs that the enqueue service adds.

tX (other) PDF Job Handler

Code: https://github.com/unfoldingWord-dev/uw-pdf-conversion-tools (a Python tool for converting uW resources to PDF)

Service: Not public, only listens to rq queue(s)

Status: Planned

Stack: Docker, Python 3, rq, converter libraries

The tX Job Handler service contains all the conversion libraries necessary for creating and uploading a PDF file. This service listens to the appropriate rq queue and runs jobs that the enqueue service adds.
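Both PDF handlers write to the same location and file-name scheme, given in the Sample Flow below. A sketch of that naming, assuming the separator rendered there as '–' is a typeset double hyphen `--` (if it is a literal en dash, change `SEP`):

```python
from typing import Optional

SEP = "--"  # assumption: the "–" shown in the Sample Flow is a typeset "--"


def pdf_location(owner: str, repo: str, ref_name: str, commit: Optional[str] = None,
                 is_tag: bool = False, dev: bool = False) -> str:
    """Return '<host>/u/<owner>/<repo>/<ref>/<file>.pdf' for a PDF build."""
    host = ("dev-" if dev else "") + "cdn.door43.org"
    folder = f"{host}/u/{owner}/{repo}/{ref_name}/"
    if is_tag:
        # Tag builds: <owner>--<repo>--<tag_name>.pdf
        filename = SEP.join([owner, repo, ref_name]) + ".pdf"
    else:
        # Branch builds also carry the commit: <owner>--<repo>--<branch>--<commit>.pdf
        if not commit:
            raise ValueError("branch builds need a commit hash")
        filename = SEP.join([owner, repo, ref_name, commit]) + ".pdf"
    return folder + filename
```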

S3

Code: Hosted service

Service: door43.org

Status: Stable

The eventual resting place of the project’s files is on the door43.org S3 bucket, in the /u/ directory. Publicly, these files are served by CloudFront. Assets are stored on the cdn.door43.org bucket.

Door43.org

Code: https://github.com/unfoldingWord/door43.org (source for the door43.org website)

Service: https://door43.org/

Status: Stable

Stack: Travis CI, JS, Jekyll

The door43.org website is a static website, meaning there is no server-side code behind it. The site is generated with Jekyll, but Jekyll is not run on every conversion job; it is run only when site-wide JS or template changes need to be made.

Docker Deployment Model

Each Docker container in the above list is tested, built, and deployed automatically. This happens for the develop and master branches of each GitHub repository. The develop branch is deployed into a development environment and the master branch into a production environment:

Sample Flow

The working system mostly uses new code (the rq queuing/job-handling package, backed by Redis) running on an AWS EC2 instance, but it still uses the old AWS Lambda call for Markdown linting, except for large Markdown files over the 6 MB AWS Lambda payload limit (see "Lambda quotas" in the AWS Lambda documentation). Here is an example of how a DCS conversion proceeds:

1. A user makes updates to their project on DCS. When they push their new work, a DCS (Gitea) webhook POSTs a JSON payload to https://(dev-)api.door43.org/client/webhook/.

2. The Door43-Enqueue-Job process (running in a Docker container on the AWS EC2 instance) vets the JSON payload and, if it is valid and consistent, simply places it in an rq queue named (dev-)Door43_webhook.

3. The Door43-Job-Handler worker (currently just one, running in a separate Docker container on the same EC2 instance) fetches the JSON payload from the queue and does the following:

- Sets up a temp folder in the AWS S3 bucket.

- Gathers details from the JSON payload.

- Downloads a zip file from the DCS repo to a temp folder, unzips the files, and then creates a ResourceContainer (RC) object.

- Creates a manifest_data dictionary, gets a TxManifest from the DB and updates it with the details gleaned above, or creates a new one if none existed.

- Gets and runs a preprocessor on the files in the temp folder. A preprocessor has a ResourceContainer (RC) and source and output folders. It copies the file(s) from the RC in the source folder over to the output folder, assembling chunks/chapters if necessary. The preprocessors can detect some particular source data errors; if so, these are later appended to the job dictionary so they can eventually be displayed to the user.

- Zips up the preprocessed files in a temp folder and uploads them to the pre-convert bucket in S3. (If no other files are found, README.md is copied across; if that fails, a small explanatory Markdown file is generated.)

- Creates a job dictionary with the important job details and stores it in a Redis dictionary (keyed by job_id). (The former TxJob and TxModule from TxManager are no longer used.)

- Names and empties an S3 CDN folder.

- Creates a tx_payload dictionary, including our callback URL, and POSTs it to the tX-Enqueue service. The webhook code is finished once the tX job request is successfully submitted.

- If an exception is thrown in this webhook code (i.e., if there is a problem getting the job submitted), the given payload is appended to the ‘failed’ queue.

4. tX-Enqueue listens at its service URL and accepts a POST request with the following fields:

- job_id: a unique identifier for the job

- identifier (optional for HTML jobs): further information to identify this job to the Door43 callback – for PDF build requests, the identifier must be in one of the following forms: a/ ‘<repo_owner_username>–<repo_name>–’, or b/ ‘<repo_owner_username>–<repo_name>–<branch_name>–<commit_hash>’

- resource_type: the case-sensitive subject field (with underscores, not spaces) exactly as defined in https://api.door43.org/v3/subjects

- input_format: one of ‘md’, ‘tsv’, ‘txt’, or ‘usfm’

- output_format: ‘html’ or ‘pdf’

- source: URL for the zipped source file(s) to be downloaded

- user_token (only required if the request is NOT coming from door43.org): a DCS user token (files in source don’t have to come via DCS, but the tX subsystem can’t be left open to any script that finds it)

- callback (optional, not used for PDFs): the URL for the callback (usually the Door43-Enqueue-Job callback URL)

- options (optional): converter options (currently unused)

- door43_webhook_received_at (optional): a datetime string; may be unnecessary or disabled in the future

The requested job is added to one of the (dev-)tX_webhook, (dev-)tX_OBS_PDF_webhook, or (dev-)tX_other_PDF_webhook queues, and tX-Enqueue then returns a JSON dictionary that includes:

* status: ‘queued’

* output: The URL where the converted result zip file will be located

* expires_at: The datetime string for when the above link will expire (1 day later)

* eta: The datetime string for the estimated time of arrival of the result (5 mins later)

* tx_retry_count: 0

5. tX-Job-Handler worker(s) accept the HTML jobs one by one from the (dev-)tX_webhook queue. The worker has the code for running all converters/linters and selects the correct functions using the resource_type (subject) field.

- A build_log dictionary is created

- The source data zip file is downloaded from the given source URL

- The correct linter and converter are chosen (by resource_type (subject), input_format, and output_format).

- The linter is run (markdown linting may still invoke an AWS lambda function)

- The converter is run

- The converted results are uploaded in a zip file to the previously advised ‘output’ URL

6. tX-OBS-PDF-Job_Handler worker(s) accept the OBS PDF jobs one by one from the (dev-)tX_OBS_PDF_webhook queue. This container uses ConTeXt to create an OBS PDF and upload it to (dev-)cdn.door43.org/u/<repo_owner_username>/<repo_name>/<branch_or_tag_name>/. The PDF file will be called either ‘<repo_owner_username>–<repo_name>–<tag_name>.pdf’ or ‘<repo_owner_username>–<repo_name>–<branch_name>–<commit_hash>.pdf’.

7. tX-other-PDF-Job_Handler worker(s) accept the other PDF jobs one by one from the (dev-)tX_other_PDF_webhook queue. This container uses Python to create a PDF and upload it to (dev-)cdn.door43.org/u/<repo_owner_username>/<repo_name>/<branch_or_tag_name>/. The PDF file will be called either ‘<repo_owner_username>–<repo_name>–<tag_name>.pdf’ or ‘<repo_owner_username>–<repo_name>–<branch_name>–<commit_hash>.pdf’.

8. tX-Job-Handler will POST the log file to the callback URL if one was given in step #4 above. (The callback is only used for HTML conversions, not for PDFs.) The posted fields include:

- Most of the fields (except ‘callback’) echoed from the submission (#4) above

- lint_module: (name string)

- linter_success: ‘true’ or ‘false’

- linter_warnings: (list)

- convert_module: (name string)

- converter_success: ‘true’ or ‘false’

- converter_info: (list)

- converter_warnings: (list)

- converter_errors: (list)

- status: ‘finished’

- success: ‘true’

- message: ‘tX job completed’

Steps 4 to 8 above form the basis of the stand-alone tX service. Anyone with a Door43 user token can post files there for linting and conversion, although this will be rate-limited to protect the service.
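Since these steps make up the stand-alone tX service, an outside client needs only to build one POST. A minimal sketch using the standard library, which prepares the request without sending it; the tX-Enqueue URL is not recorded in this document, so the caller supplies it, and the body is limited to the required fields plus an optional callback.

```python
import json
import urllib.request
from typing import Any, Dict


def build_tx_request(tx_enqueue_url: str, user_token: str, job_id: str,
                     resource_type: str, input_format: str, output_format: str,
                     source: str, callback: str = "") -> urllib.request.Request:
    """Prepare (but do not send) a JSON POST to tX-Enqueue."""
    body: Dict[str, Any] = {
        "job_id": job_id,
        "user_token": user_token,  # required when not calling from door43.org
        "resource_type": resource_type,
        "input_format": input_format,
        "output_format": output_format,
        "source": source,
    }
    if callback:
        body["callback"] = callback  # optional; not used for PDF jobs
    return urllib.request.Request(
        tx_enqueue_url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

`urllib.request.urlopen(req)` would send it. The `job_id` must be unique, so a caller might generate it with `str(uuid.uuid4())`.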

9. Door43-Enqueue-Job accepts the log POSTed at its callback URL:

- The job info is retrieved from Redis and matched & checked

- The zip file containing the converted file(s) is downloaded

- Templating is done

- The results are uploaded to the S3 CDN bucket

- The final log is uploaded to the S3 CDN bucket

- The new revision is deployed to the Door43 site.

10. The separate Watchtower Docker container is configured to check for new updates to the above containers. If an update is detected, Watchtower automatically pulls it, gives the existing container a warm shutdown and deletes it, and then starts the new, updated container.
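The "matched & checked" step that Door43-Enqueue-Job performs when the tX callback arrives is not detailed. A minimal sketch, with a plain dictionary standing in for the Redis job store keyed by job_id; which fields are compared is an assumption.

```python
from typing import Any, Dict

ECHOED_FIELDS = ("identifier", "resource_type", "input_format", "output_format")


def match_callback(stored_jobs: Dict[str, Dict[str, Any]],
                   callback: Dict[str, Any]) -> Dict[str, Any]:
    """Look up the job a tX callback refers to and sanity-check it.

    stored_jobs stands in for the Redis dictionary keyed by job_id.
    """
    job_id = callback.get("job_id")
    job = stored_jobs.get(job_id)
    if job is None:
        raise KeyError(f"unknown job_id: {job_id!r}")
    # Fields echoed back by tX should agree with what Door43 submitted.
    for field in ECHOED_FIELDS:
        if field in job and callback.get(field) != job[field]:
            raise ValueError(f"{field} mismatch for job {job_id}")
    if callback.get("status") != "finished":
        raise ValueError(f"job {job_id} not finished: {callback.get('status')!r}")
    return job
```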

Stats

We gather stats on system performance to ensure that the service(s) are operating as expected. Secondarily, there may be insights to be gleaned later on about how to optimize the system or what features may be needed.

Door43 Jobs

Here is a list of stats that are being built into the Door43 Jobs side of the system:

gauges.door43.[prod|dev].enqueue-job.[webhook|callback].workers.available

gauges.door43.[prod|dev].enqueue-job.[webhook|callback].queue.length.failed

gauges.door43.[prod|dev].enqueue-job.[webhook|callback].queue.length.current

door43.[prod|dev].enqueue-job.[webhook|callback].posts.attempted

door43.[prod|dev].enqueue-job.[webhook|callback].posts.succeeded

door43.[prod|dev].enqueue-job.[webhook|callback].posts.failed

set.door43.[prod|dev].job-handler.webhook.repo_ids

set.door43.[prod|dev].job-handler.webhook.owner_ids

set.door43.[prod|dev].job-handler.webhook.pusher_ids

door43.[prod|dev].job-handler.[webhook|callback].jobs.attempted

door43.[prod|dev].job-handler.[webhook|callback].jobs.completed

door43.[prod|dev].job-handler.webhook.users.invoked.(username)

gauges.door43.[prod|dev].job-handler.types.invoked.(type/subject)

Set to 0 for success, 1 for failure (as per Linux conventions).

timers.door43.[prod|dev].job-handler.[webhook|callback|total].job.duration
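Assuming these metrics go to a StatsD-style collector (the gauges., timers. and set. prefixes suggest one, but the client library is not named here), each update is a single text line whose suffix carries the metric type. A minimal sketch; `door43_metric` is a hypothetical helper, and since it is not stated whether the type prefixes above are part of the sent name or added by the backend, the example sends bare names.

```python
from typing import Union

_STATSD_TYPES = {"counter": "c", "gauge": "g", "timer": "ms", "set": "s"}


def door43_metric(env: str, *parts: str) -> str:
    """Build a dotted name such as 'door43.prod.job-handler.webhook.jobs.attempted'."""
    if env not in ("prod", "dev"):
        raise ValueError(f"unknown environment: {env}")
    return ".".join(("door43", env) + parts)


def statsd_line(name: str, value: Union[int, float, str], kind: str) -> str:
    """Format one metric as a plain-text StatsD protocol line."""
    if kind not in _STATSD_TYPES:
        raise ValueError(f"unknown metric kind: {kind}")
    return f"{name}:{value}|{_STATSD_TYPES[kind]}"
```

Lines like these are typically sent as UDP datagrams to the StatsD daemon, which listens on port 8125 by default.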

tX Jobs

Here is a list of stats that are being built into the tX Jobs side of the system:

gauges.tx.[prod|dev].enqueue-job.workers.[HTML|OBSPDF|otherPDF].available

gauges.tx.[prod|dev].enqueue-job.queue.[HTML|OBSPDF|otherPDF].length.failed

gauges.tx.[prod|dev].enqueue-job.queue.[HTML|OBSPDF|otherPDF].length.current

tx.[prod|dev].enqueue-job.posts.attempted

tx.[prod|dev].enqueue-job.posts.succeeded

tx.[prod|dev].enqueue-job.posts.invalid

tx.[prod|dev].job-handler.jobs.[HTML|OBSPDF|otherPDF].attempted

tx.[prod|dev].job-handler.jobs.[HTML|OBSPDF|otherPDF].input.(input_format)

tx.[prod|dev].job-handler.jobs.[HTML|OBSPDF|otherPDF].subject.(resource_subject)

tx.[prod|dev].job-handler.callbacks.[HTML|OBSPDF|otherPDF].attempted

tx.[prod|dev].job-handler.jobs.[HTML|OBSPDF|otherPDF].completed

timers.tx.[prod|dev].job-handler.job.[HTML|OBSPDF|otherPDF].duration
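The bracketed alternatives in these names are shorthand. When setting up dashboards or alerts it can help to expand them into every concrete metric name; here is a small sketch (a hypothetical helper) that leaves run-time placeholders such as `(input_format)` untouched.

```python
import itertools
import re
from typing import List

_ALTERNATIVES = re.compile(r"\[([^\]]+)\]")


def expand_metric_template(template: str) -> List[str]:
    """Expand every "[a|b|c]" group in a metric template into concrete names.

    Parenthesised placeholders such as "(input_format)" are left as-is,
    since their values are only known at run time.
    """
    # re.split with one capture group alternates literal text and group bodies.
    parts = _ALTERNATIVES.split(template)
    literals = parts[0::2]
    choices = [group.split("|") for group in parts[1::2]]
    names = []
    for combo in itertools.product(*choices):
        pieces = [literals[0]]
        for choice, literal in zip(combo, literals[1:]):
            pieces.append(choice)
            pieces.append(literal)
        names.append("".join(pieces))
    return names
```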

OLD tX API Example Usage (needs updating or maybe just deleting)

The tX conversion platform is available via a RESTful API. First, we’ll cover authentication, then show examples of using the API.

Authentication

tX uses HTTP Basic Auth over HTTPS. You’ll need to submit your Door43 username and password credentials with every request.

We plan to support Gogs user tokens eventually. You may generate a token by signing in to your git.door43.org account, clicking the down arrow in the upper right (next to your avatar), and selecting “Your Settings”. Click “Applications” in the left-hand bar, then select “Generate New Token”. Name the token “tX” and click “Generate Token”. Your token will be displayed at the top of the page in a light blue box (it will look something like ‘d075e12826a327437800b882ae8724120b060f1a’). Record it in a safe location for your use.

Examples

Listing Root

Perform a GET request against https://api.door43.org/tx to determine what endpoints and methods are allowed.

Request:

GET https://api.door43.org/tx

Response:

200 OK

```json
{
  "version": "1",
  "links": [
    {
      "href": "https://api.door43.org/tx/job",
      "rel": "list",
      "method": "GET"
    },
    {
      "href": "https://api.door43.org/tx/job",
      "rel": "create",
      "method": "POST"
    },
    ...
```

Listing Jobs

To list your jobs, perform the following example. Note that if you haven’t created any jobs yet then your list will be empty.

Request:

GET https://api.door43.org/tx/job?user_token=<gogs_user_token>

or

POST https://api.door43.org/tx/job

```json
{
  "user_token": "<gogs_user_token>"
}
```

Response:

200 OK

```json
{
  "job": [
    {
      "job_id": "b2df28c8-7696-11e6-a03d-278f51ff8a84",
      "created_at": "2016-12-27T16:32:00Z",
      "eta": "2016-12-27T16:33:00Z",
      "callback": "https://your.url/for_job_complete_update",
      "resource_type": "obs",
      "input_format": "md",
      "output_format": "pdf",
      "options": {
        "line_spacing": "120%"
      },
      "links": [
        {
          "href": "https://api.door43.org/tx/job/b2df28c8-7696-11e6-a03d-278f51ff8a84",
          "rel": "self",
          "method": "GET"
        }
      ]
    }
  ],
  "links": [
    {
      "href": "https://api.door43.org/tx/job",
      "rel": "create",
      "method": "POST"
    }
  ]
}
```

Create a Conversion Job

Your request should contain a link to the source file(s) to be converted. The tX app will use this link to get the source content to be converted. Eventually, some type of multipart POST will be accepted, allowing you to send your data with your request. In this case, the first part should contain the binary representation of the data to be converted and the second part should contain a JSON object with your requested conversion options.

You may provide a callback URL: an endpoint to which tX will make a POST request to notify your code that the conversion job is complete. This is optional; your client is also free to GET the output URL as many times as it likes until the content is available.

An example of the JSON object may be seen below (user_token can be in the URL or the JSON payload):

Request:

POST https://api.door43.org/tx/job?user_token=<gogs_user_token>

```json
{
  "user_token": "<gogs_user_token>",
  "resource_type": "obs",
  "input_format": "md",
  "output_format": "pdf",
  "source": "https://git.door43.org/username/project/archive/master.zip",
  "callback": "https://your.url/for_job_complete_update",
  "options": {
    "line_spacing": "120%"
  }
}
```

Response:

201 Created

```json
{
  "job": {
    "job_id": "b2df28c8-7696-11e6-a03d-278f51ff8a84",
    "created_at": "2016-12-27T16:32:00Z",
    "eta": "2016-12-27T16:33:00Z",
    "source": "https://git.door43.org/username/project/archive/master.zip",
    "callback": "https://your.url/for_job_complete_update",
    "resource_type": "obs",
    "input_format": "md",
    "output_format": "pdf",
    "output": "https://cdn.door43.org/tx/jobs/b2df28c8-7696-11e6-a03d-278f51ff8a84.zip",
    "output_expiration": "2016-12-28T16:32:00Z",
    "options": {
      "line_spacing": "120%"
    },
    "status": "requested",
    "success": null,
    "links": [
      {
        "href": "https://api.door43.org/tx/job/b2df28c8-7696-11e6-a03d-278f51ff8a84",
        "rel": "self",
        "method": "GET"
      }
    ]
  },
  "links": [
    {
      "href": "https://api.door43.org/tx/job",
      "rel": "list",
      "method": "GET"
    }
  ]
}
```

Note that if you specify invalid options in your request then you will receive a 400 Bad Request response with a JSON payload similar to:

Response:

```json
{
  "errorMessage": "resource_type unknown"
}
```

Conversion Job Output

To get the output from your conversion job, simply make a request to the output URL recorded above. If the document is not available yet then the server will return a 404 Not Found message.
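Under the old API as described here, a client without a callback could simply poll. A minimal sketch, assuming the 404-until-ready behaviour above; `fetch` is a hypothetical injectable hook so the loop can be exercised without a network.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Optional


def wait_for_output(url: str, timeout_s: float = 600, interval_s: float = 15,
                    fetch: Optional[Callable[[str], Optional[bytes]]] = None) -> bytes:
    """Poll a job's output URL until it stops returning 404 Not Found."""
    def default_fetch(u: str) -> Optional[bytes]:
        try:
            with urllib.request.urlopen(u) as resp:
                return resp.read()
        except urllib.error.HTTPError as err:
            if err.code == 404:
                return None  # not converted yet
            raise

    fetch = fetch or default_fetch
    deadline = time.monotonic() + timeout_s
    while True:
        data = fetch(url)
        if data is not None:
            return data
        if time.monotonic() >= deadline:
            raise TimeoutError(f"{url} still not available after {timeout_s}s")
        time.sleep(interval_s)
```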

If you provided a callback URL, tX will notify your code when the output file is available by POSTing a JSON object such as the following to your callback:

```
{
  "job_id": "b2df28c8-7696-11e6-a03d-278f51ff8a84",
  "identifier": "<the unique identifier you sent in the job request if you specified one>",
  "success": true, # true = files were converted, possibly with warnings; false = conversion failed
  "status": "success", # See "Job Status"
  "message": "Converted successfully", # A message about the status
  "output": "https://cdn.door43.org/tx/jobs/b2df28c8-7696-11e6-a03d-278f51ff8a84.zip",
  "output_expiration": "2016-12-28T16:32:00Z",
  "log": ["Started conversion", ..., "Completed conversion"],
  "warnings": [],
  "errors": [],
  "created_at": "2016-12-27T16:32:00Z",
  "started_at": "2016-12-27T16:32:21Z",
  "ended_at": "2016-12-27T16:33:13Z"
}
```

tX API Example Usage

The tX conversion platform is available via a RESTful API. First, we’ll cover authentication, then show examples of using the API.

Authentication

You may generate a Gogs user token by signing in to your git.door43.org account, clicking the down arrow in the upper right (next to your avatar), and selecting “Your Settings”. Click “Applications” in the left-hand bar, then select “Generate New Token”. Name the token “tX” and click “Generate Token”. Your token will be displayed at the top of the page in a light blue box (it will look something like ‘d075e12826a327437800b882ae8724120b060f1a’). Record it in a safe location for your use.

[example deleted Feb2020]